
Meal Planning Agent

Leverage the Confluent Data Streaming Platform for real-time communication between specialized agents, such as those managing distinct meal plans for corporate customers, healthcare patients, or individual family members. The agents process data streams to dynamically generate meal plans that adapt to changing inputs and preferences.

Enable Real-Time, Adaptive Decision-Making for AI Agents

Rigid architectures struggle with scalability, integrating diverse data sources, reliance on batch processing, and keeping data consistent and up to date across systems. In a multi-agent system where each agent specializes in a different task, coordination is hard as well: responsibilities overlap, updates get missed, or goals become misaligned.

The event-driven architecture of the Confluent Data Streaming Platform lets the agents in a multi-agent meal planning system respond to real-time events (e.g., schedule changes or customer preference updates). Contextualized, trustworthy streaming data acts as a shared language that helps agents stay synchronized, react quickly, and handle failures.
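Part of how streaming data helps agents handle failures is Kafka's durable log: a consumer commits the offset it has processed up to, so a restarted agent resumes where it left off instead of losing events. The following is a minimal in-memory sketch of that idea; `TopicLog`, `Agent`, and the event types are hypothetical, and nothing here uses a Confluent or Kafka client API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TopicLog:
    """Append-only list of events; a record's index is its offset."""
    records: list = field(default_factory=list)

    def append(self, event: dict) -> int:
        self.records.append(event)
        return len(self.records) - 1


@dataclass
class Agent:
    name: str
    committed: int = 0  # stands in for the consumer group's committed offset (next offset to read)
    seen: list = field(default_factory=list)

    def poll(self, log: TopicLog, fail_at: Optional[int] = None) -> None:
        """Process records from the committed offset onward, committing after each one."""
        for offset in range(self.committed, len(log.records)):
            if offset == fail_at:
                raise RuntimeError(f"{self.name} crashed at offset {offset}")
            self.seen.append(log.records[offset]["type"])
            self.committed = offset + 1  # commit only after processing (at-least-once)


log = TopicLog()
for event_type in ["schedule_changed", "preference_updated", "allergy_added"]:
    log.append({"type": event_type})

agent = Agent("meal-planner")
try:
    agent.poll(log, fail_at=1)  # simulate a crash before the second event is handled
except RuntimeError as err:
    print(err)
agent.poll(log)  # "restart": resumes from committed offset 1, so no event is skipped
print(agent.seen)
```

Because the commit happens only after an event is handled, a crash between handling and committing would cause that event to be processed again rather than lost, which is why agents in this style are usually written to tolerate duplicates.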

Personalized meal plans based on real-time information for greater customer satisfaction

Operational savings with a fully managed platform, automated meal planning, and waste reduction

Scalability for real-time data processing to handle expanding use cases without performance bottlenecks

Adaptability to changing preferences and dietary needs for continuous relevance in meal planning

Build with Confluent

This use case leverages the following building blocks in Confluent Cloud:

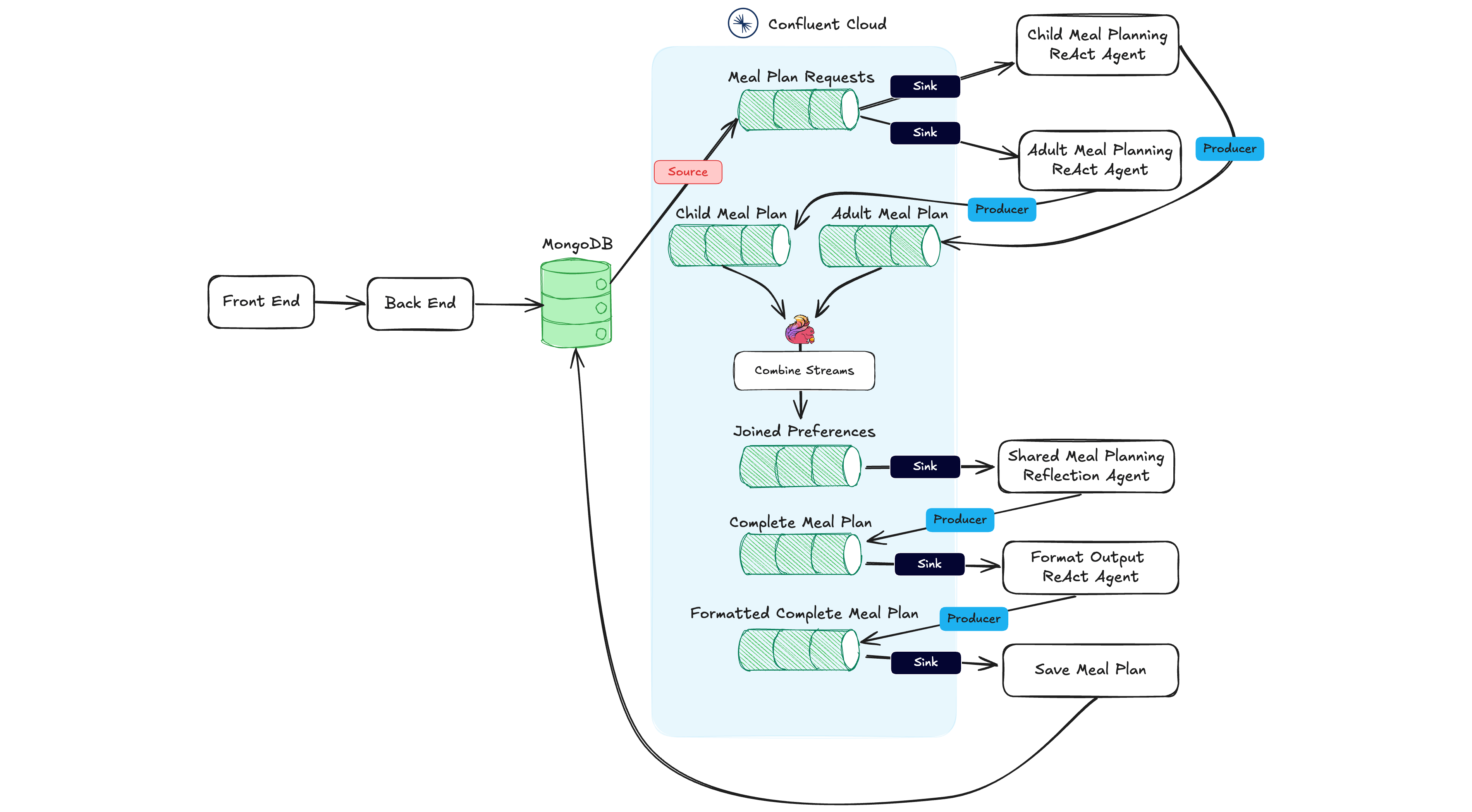

Reference Architecture

This architecture shows how a data streaming platform helps coordinate a team of agents to solve a problem. Confluent acts as a shared communication and orchestration layer for the agents, so the user-facing web application doesn't need to know anything about AI. Agents are designed to emit and listen for events: signals that something has happened, to which agents can respond.
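A minimal sketch of the emit-and-listen pattern, assuming an in-memory `EventBus` in place of Confluent topics. The topic names (`preferences`, `meal_plans`, `grocery_lists`) and agent functions are hypothetical, and handlers run synchronously here for brevity, whereas Kafka topics are durable and consumed asynchronously.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

Handler = Callable[[dict], None]


class EventBus:
    """In-memory stand-in for topics: producers and consumers share only topic names."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)
        self.history: List[Tuple[str, dict]] = []

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        self.history.append((topic, event))
        for handler in self._subscribers[topic]:
            handler(event)  # synchronous here; Kafka consumers would read asynchronously


bus = EventBus()


def meal_plan_agent(prefs: dict) -> None:
    # Reacts to a preference event by emitting a plan; it never calls other agents directly.
    plan = {"customer_id": prefs["customer_id"], "meals": [f"{prefs['diet']} bowl"]}
    bus.publish("meal_plans", plan)


def grocery_agent(plan: dict) -> None:
    # Builds a grocery list from any plan that appears on the topic.
    items = [f"ingredients for {meal}" for meal in plan["meals"]]
    bus.publish("grocery_lists", {"customer_id": plan["customer_id"], "items": items})


bus.subscribe("preferences", meal_plan_agent)
bus.subscribe("meal_plans", grocery_agent)

# The web app only records what happened; it knows nothing about the agents.
bus.publish("preferences", {"customer_id": "c-42", "diet": "vegetarian"})
print([topic for topic, _ in bus.history])
```

The web app publishes a single fact, and the downstream events follow from agents reacting to it. Adding another agent means adding a subscription, not changing the publisher.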

Continuously stream real-time records from data sources such as MongoDB, and sink outputs to a database of your choice. Use Confluent’s portfolio of 120+ pre-built connectors (including change data capture connectors) to quickly connect data systems and applications.
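Change data capture turns each database write into an event. As a sketch, assuming the MongoDB source connector delivers change-stream documents as JSON with `operationType`, `documentKey`, and `fullDocument` fields (and is configured to include the full document on updates), a consumer can replay them into an up-to-date view like the one a sink would write to the target database. The customer IDs and fields are hypothetical.

```python
import json
from typing import Dict

# Hypothetical record values shaped like MongoDB change-stream events.
CHANGE_EVENTS = [
    '{"operationType": "insert", "documentKey": {"_id": "c-1"}, "fullDocument": {"_id": "c-1", "diet": "vegan"}}',
    '{"operationType": "insert", "documentKey": {"_id": "c-2"}, "fullDocument": {"_id": "c-2", "diet": "keto"}}',
    '{"operationType": "update", "documentKey": {"_id": "c-1"}, "fullDocument": {"_id": "c-1", "diet": "vegetarian"}}',
    '{"operationType": "delete", "documentKey": {"_id": "c-2"}}',
]


def apply_change(view: Dict[str, dict], raw_value: str) -> None:
    """Apply one change event to a key -> document view (what a sink would upsert or delete)."""
    event = json.loads(raw_value)
    key = event["documentKey"]["_id"]
    op = event["operationType"]
    if op in ("insert", "update", "replace"):
        view[key] = event["fullDocument"]  # assumes the full document is included
    elif op == "delete":
        view.pop(key, None)
    # Other operation types (e.g. "drop") are ignored in this sketch.


customer_view: Dict[str, dict] = {}
for raw in CHANGE_EVENTS:
    apply_change(customer_view, raw)
print(customer_view)
```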

Use Flink stream processing to join data streams into an enriched preferences topic that the agents can act on to produce a customized meal plan and grocery list.
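The enrichment join can be pictured with a minimal Python sketch that mimics joining two keyed streams on `customer_id` while keeping only the latest row per key, emitting an enriched record whenever either side changes. It illustrates the semantics only; it is not Flink's API, and the stream names and fields are hypothetical. In Confluent Cloud the same step could be expressed as a Flink SQL join.

```python
from typing import Dict, Iterable, Iterator, Tuple


def enrich(events: Iterable[Tuple[str, dict]]) -> Iterator[dict]:
    """Inner-join 'profiles' and 'preferences' on customer_id, keeping the latest row per key."""
    latest: Dict[str, Dict[str, dict]] = {"profiles": {}, "preferences": {}}
    for stream, row in events:
        cid = row["customer_id"]
        latest[stream][cid] = row  # newer rows replace older ones for the same key
        profile = latest["profiles"].get(cid)
        prefs = latest["preferences"].get(cid)
        if profile is not None and prefs is not None:
            # Emit an updated enriched record whenever either side changes.
            yield {**profile, **prefs}


events = [
    ("preferences", {"customer_id": "c-7", "cuisine": "thai"}),  # no profile yet: nothing emitted
    ("profiles", {"customer_id": "c-7", "segment": "healthcare", "allergies": ["peanut"]}),
    ("preferences", {"customer_id": "c-7", "cuisine": "italian"}),
]
enriched = list(enrich(events))
for record in enriched:
    print(record)
```

Each enriched record carries both the customer's context (segment, allergies) and their current preferences, so a meal planning agent can act on one topic instead of correlating several.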

Leverage Stream Governance to ensure data quality, trustworthiness, and compliance. Schema Registry defines and enforces data standards that keep data compatible at scale, so AI agents can process, interpret, and act on information across heterogeneous systems.
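To illustrate what enforcing a schema and checking compatibility involve, here is a simplified Python sketch. Schemas are plain dicts mapping field names to a type and an optional default, and `is_backward_compatible` approximates the BACKWARD rule (consumers using the new schema can read data written with the old one, so new fields need defaults). Real Schema Registry checks operate on Avro, Protobuf, or JSON Schema definitions and allow some cases, such as certain type promotions, that this model rejects. All names are hypothetical.

```python
from typing import Any, Dict, List, Tuple

REQUIRED = object()  # sentinel meaning "this field has no default"
Schema = Dict[str, Tuple[type, Any]]  # field name -> (Python type, default or REQUIRED)


def validate(schema: Schema, record: dict) -> List[str]:
    """Return the problems found; an empty list means the record conforms."""
    errors = []
    for name, (ftype, default) in schema.items():
        if name not in record:
            if default is REQUIRED:
                errors.append(f"missing required field '{name}'")
        elif not isinstance(record[name], ftype):
            errors.append(f"'{name}' should be {ftype.__name__}")
    return errors


def is_backward_compatible(old: Schema, new: Schema) -> bool:
    """Simplified BACKWARD check: can a reader using `new` read records written with `old`?"""
    for name, (ftype, default) in new.items():
        if name in old:
            if old[name][0] is not ftype:
                return False  # this model rejects every type change
        elif default is REQUIRED:
            return False  # a new field needs a default to fill in older records
    return True  # fields dropped from `new` are simply ignored when reading old data


v1: Schema = {"customer_id": (str, REQUIRED), "diet": (str, REQUIRED)}
v2_ok: Schema = {**v1, "calorie_target": (int, 2000)}
v2_bad: Schema = {**v1, "allergies": (list, REQUIRED)}

print(validate(v1, {"customer_id": "c-1", "diet": 3}))
print(is_backward_compatible(v1, v2_ok), is_backward_compatible(v1, v2_bad))
```

Rejecting `v2_bad` at registration time is what keeps an agent reading older records from failing on a field that was never written.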