
Shift Left: Headless Data Architecture, Part 1

The headless data architecture emerges organically when you separate data storage, management, optimization, and access from the services that write, process, and query that data. With this architecture, you can manage your data, including permissions, schema evolution, and table optimizations, from a single logical location. It also makes regulatory compliance much simpler, because your data resides in one place instead of being copied to every processing engine that needs it.

We call it a “headless” data architecture because of its similarity to a “headless server,” which has no monitor or keyboard attached, so you have to bring your own to log in. Likewise, to process or query data in a headless data architecture, you bring your own processing or query “head,” for example Trino, Presto, Apache Flink®, or Apache Spark™, and plug it into the data.

A headless data architecture can encompass multiple data formats, with data streams and tables as the two most common. Streams provide low-latency access to incremental data, while tables provide efficient bulk-query capabilities. Together, they give you the flexibility to choose the format that is most suitable for your use cases, whether it’s operational, analytical, or somewhere in between.

First, let’s take a look at streaming in the headless data architecture.

Streams for a headless data architecture

Apache Kafka®, an open source distributed event streaming platform, has had a headless data model since day one. Kafka provides the API, the data storage layer, access controls, and basic metadata about the cluster. A producer writes data to a topic, and then one or more consumers can read that data at their own pace.

The producer acts as a fully independent head. It may be written in Go, Python, Java, Rust, C, or another language, or it may use a stream processing framework like Kafka Streams or Apache Flink. Your consumers are similarly independent: perhaps one is a Kafka Connect instance, another is written in Python, and a third is written in C.
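To make the “independent heads” idea concrete, here is a minimal in-memory sketch, not Kafka’s client API: a single append-only log where each consumer group tracks its own read offset, so a slow reader never holds back a fast one. The `Topic` class and the group names `fulfillment` and `analytics` are hypothetical; real Kafka tracks committed offsets per consumer group and partition on the broker side.

```python
from collections import defaultdict


class Topic:
    """Toy stand-in for a single-partition Kafka topic (illustrative only)."""

    def __init__(self, name):
        self.name = name
        self.log = []                    # records in the order they were produced
        self.offsets = defaultdict(int)  # next offset to read, per consumer group

    def produce(self, record):
        """Append a record and return the offset it was written at."""
        self.log.append(record)
        return len(self.log) - 1

    def poll(self, group, max_records=10):
        """Return up to max_records unread records for `group` and advance its offset."""
        start = self.offsets[group]
        batch = self.log[start:start + max_records]
        self.offsets[group] = start + len(batch)
        return batch


orders = Topic("orders")
for i in range(5):
    orders.produce({"order_id": i})

# Two independent heads read the same topic at different paces.
fulfillment_batch = orders.poll("fulfillment", max_records=5)
analytics_batch = orders.poll("analytics", max_records=2)
print(len(fulfillment_batch))                     # 5
print([r["order_id"] for r in analytics_batch])   # [0, 1]
print(orders.offsets["analytics"])                # 2
```

The producer never needs to know who reads the data or how fast; each head only advances its own offset.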

Full streaming support for the headless data architecture requires additional functionality. First, events need well-defined, explicit schemas for reliability and safety, which a schema registry provides and enforces. You’ll also need a metadata catalog to track ownership, manage tags and business metadata, and provide browsing, discovery, and lineage capabilities.
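A real schema registry stores versioned Avro, Protobuf, or JSON Schema definitions, and serializers check events against them. As a simplified stand-in, the sketch below uses a plain dict of field types (`ORDER_SCHEMA_V1` is a hypothetical schema, and `validate`/`produce_checked` are illustrative helpers) and rejects any event that doesn’t match before it is written. It shows the “enforce at the producer” idea in miniature, not a registry client.

```python
ORDER_SCHEMA_V1 = {"order_id": int, "customer_id": str, "amount_cents": int}  # hypothetical schema


class SchemaViolation(ValueError):
    """Raised when an event does not match its declared schema."""


def validate(event, schema):
    """Require exactly the schema's fields, each with the declared Python type."""
    missing = schema.keys() - event.keys()
    extra = event.keys() - schema.keys()
    if missing or extra:
        raise SchemaViolation(f"missing={sorted(missing)} extra={sorted(extra)}")
    for name, expected in schema.items():
        value = event[name]
        # bool is a subclass of int in Python, so reject it explicitly for int fields.
        if not isinstance(value, expected) or (expected is int and isinstance(value, bool)):
            raise SchemaViolation(
                f"{name}: expected {expected.__name__}, got {type(value).__name__}"
            )


def produce_checked(log, event, schema):
    """Validate before writing, so malformed events never reach downstream consumers."""
    validate(event, schema)
    log.append(event)


topic_log = []
produce_checked(topic_log, {"order_id": 1, "customer_id": "c-42", "amount_cents": 1999}, ORDER_SCHEMA_V1)
try:
    produce_checked(topic_log, {"order_id": "2", "customer_id": "c-7", "amount_cents": 500}, ORDER_SCHEMA_V1)
except SchemaViolation as err:
    print("rejected:", err)   # rejected: order_id: expected int, got str
print(len(topic_log))         # 1
```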

Streams are commonly used to drive operational use cases, like fulfilling e-commerce orders, ordering inventory, and orchestrating the complex workflows that underpin businesses. And while you may choose to use streams to build analytical use cases, you may instead rely on periodic batch-based computations built on tables. So how do we integrate tables into the headless data architecture?

Tables for a headless data architecture

Tables have long been a staple of data lakes and data warehouses, but historically they were defined by, and tied to, a proprietary database. If you wanted to query a table, you had to use the database engine that stored it in the first place.

Today, we rely on popular open source file formats like Apache Parquet™ to provide clean definitions of the underlying data. But a Parquet file only describes its own contents; the definition of the table itself (which files belong to it, what its schema is, and how it has changed) has to live independently of those files. For this we look to an increasingly popular technology: Apache Iceberg, an open source table format. Iceberg manages tables built from data files such as Parquet, and it provides several key components for enabling tables in a headless data architecture.

Apache Iceberg key components:

Table storage and optimization. Iceberg table data is stored in files, typically in readily available cloud object storage like Amazon S3. Iceberg tracks those files and supports maintenance of the data, including optimizations like file compaction and snapshot-based versioning.

Catalog. The Iceberg catalog contains metadata, schemas, and table information, such as which tables you have and where they are. You declare your tables in the catalog so that you can plug in your processing and query engines to access the underlying data.

Transactions. Iceberg supports transactions with concurrent reads and writes: readers see a consistent snapshot of the table, and writers commit atomically, so multiple heads can do heavy-duty work without affecting each other.

Time travel. You can execute queries against a table as of a specific point in time, which makes Iceberg very useful for auditing, bug fixing, and regression testing.

Pluggable data layer. Iceberg provides a central, pluggable data layer. You can plug in open source engines like Flink, Trino, Presto, Hive, Spark, and DuckDB, or popular SaaS options like BigQuery, Redshift, Snowflake, and Databricks.
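The transaction and time travel components both rest on one idea: every commit produces a new immutable snapshot that lists the table’s data files, and a commit only succeeds if the table hasn’t changed since the writer read it (optimistic concurrency). The toy model below sketches that idea; it is not PyIceberg or any real Iceberg API, and the `ToyTable` class and `s3://bucket/...` paths are hypothetical.

```python
import time
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Snapshot:
    snapshot_id: int
    timestamp_ms: int
    data_files: tuple  # every file that makes up the table as of this snapshot


class CommitConflict(RuntimeError):
    """Another writer committed after this writer read its base snapshot."""


@dataclass
class ToyTable:
    """Illustrative model of snapshot-based table metadata (not Iceberg's real API)."""
    snapshots: list = field(default_factory=list)

    def current(self):
        return self.snapshots[-1] if self.snapshots else None

    def commit(self, base, added_files, ts_ms=None):
        """Optimistic commit: succeed only if the table is still at `base`."""
        if base is not self.current():
            raise CommitConflict("table changed since base snapshot; re-read and retry")
        files = (base.data_files if base else ()) + tuple(added_files)
        snap = Snapshot(
            snapshot_id=len(self.snapshots) + 1,
            timestamp_ms=int(time.time() * 1000) if ts_ms is None else ts_ms,
            data_files=files,
        )
        self.snapshots.append(snap)  # stands in for the atomic metadata-pointer swap
        return snap

    def as_of(self, ts_ms):
        """Time travel: the latest snapshot committed at or before ts_ms."""
        earlier = [s for s in self.snapshots if s.timestamp_ms <= ts_ms]
        if not earlier:
            raise LookupError(f"no snapshot at or before {ts_ms}")
        return earlier[-1]


table = ToyTable()
table.commit(None, ["s3://bucket/orders/f1.parquet"], ts_ms=1_000)

base_a = table.current()  # two heads read the same base snapshot
base_b = table.current()
table.commit(base_a, ["s3://bucket/orders/f2.parquet"], ts_ms=2_000)
try:
    table.commit(base_b, ["s3://bucket/orders/f3.parquet"], ts_ms=3_000)
except CommitConflict:
    # Retry against the new state instead of overwriting writer A's files.
    table.commit(table.current(), ["s3://bucket/orders/f3.parquet"], ts_ms=3_000)

print(table.as_of(1_500).data_files)     # ('s3://bucket/orders/f1.parquet',)
print(len(table.current().data_files))   # 3
```

Real Iceberg tracks files through manifest lists and manifests rather than a flat tuple, and a conflicting commit can be retried by reapplying the change to the newer metadata, but the retry-on-conflict shape is the same.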

How you integrate with these services varies, but it typically relies on replicating metadata from the Iceberg catalog so that your processing engine can figure out where the files are and how to query them. Consult your processing engine’s Iceberg integration documentation for details.
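The lookup every head performs can be sketched in a few lines: ask the catalog for a table’s metadata, then read the listed files directly. This is a hypothetical model (`ToyCatalog`, `plan_scan`, and the `sales.orders` table are illustrative names, not any engine’s API), meant only to show why two different engines end up reading the same files with no copy in between.

```python
from dataclasses import dataclass


@dataclass
class TableMetadata:
    location: str
    schema: dict       # column name -> type name
    data_files: list   # files in the current snapshot


class ToyCatalog:
    """Hypothetical catalog mapping table identifiers to their current metadata."""

    def __init__(self):
        self._tables = {}

    def register(self, identifier, metadata):
        self._tables[identifier] = metadata

    def load_table(self, identifier):
        return self._tables[identifier]


def plan_scan(catalog, identifier, engine):
    """What any head does first: ask the catalog which files to read, then read them itself."""
    meta = catalog.load_table(identifier)
    return {"engine": engine, "columns": list(meta.schema), "files": list(meta.data_files)}


catalog = ToyCatalog()
catalog.register("sales.orders", TableMetadata(
    location="s3://bucket/sales/orders",
    schema={"order_id": "long", "amount_cents": "long"},
    data_files=["s3://bucket/sales/orders/data/f1.parquet"],
))

flink_plan = plan_scan(catalog, "sales.orders", "flink")
duckdb_plan = plan_scan(catalog, "sales.orders", "duckdb")
print(flink_plan["files"] == duckdb_plan["files"])   # True: both heads read the same files
```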

Benefits of a headless data architecture

So what are the main benefits of a headless data architecture?

You don’t have to copy data around anymore, saving lots of money and time. For example, AWS users can plug their tables into Athena, Snowflake, and Redshift, all without moving their data anywhere.

You don’t have to coordinate multiple copies of data anymore, eliminating similar-yet-different datasets, which in turn leads to fewer data pipelines.

You can choose whatever head is most suitable for each job, for example Flink for one and DuckDB for another. Because your data is abstracted away from the processing engines, you aren’t locked into any particular engine simply because you loaded data into it years ago.

With a single point of access control, you can control access at the data layer for all processors. You can also apply more granular controls to private and financial information to make sure that data remains secure.
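Why a single point of access control matters is easiest to see in a sketch: if the policy lives with the data rather than inside each engine, every head gets the same answer. The `Policy` class, `POLICIES` store, `authorize_read` function, and principal names below are all hypothetical; in practice this enforcement lives in the catalog, storage permissions, or a governance service, not in a Python dict.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    readers: frozenset                       # principals allowed to read the table
    masked_columns: frozenset = frozenset()  # columns hidden from non-privileged readers
    privileged: frozenset = frozenset()      # principals who see masked columns in clear


# Hypothetical policy store kept with the data layer, not inside any single engine.
POLICIES = {
    "sales.orders": Policy(
        readers=frozenset({"analyst", "fraud-team"}),
        masked_columns=frozenset({"card_number"}),
        privileged=frozenset({"fraud-team"}),
    ),
}


def authorize_read(table, principal, rows):
    """Apply the table's policy before any head (Flink, Trino, or a Python script) sees rows."""
    policy = POLICIES[table]
    if principal not in policy.readers:
        raise PermissionError(f"{principal} may not read {table}")
    if principal in policy.privileged:
        return [dict(row) for row in rows]
    return [
        {col: ("****" if col in policy.masked_columns else val) for col, val in row.items()}
        for row in rows
    ]


rows = [{"order_id": 1, "card_number": "4111111111111111"}]
print(authorize_read("sales.orders", "analyst", rows))
# [{'order_id': 1, 'card_number': '****'}]
print(authorize_read("sales.orders", "fraud-team", rows))
# [{'order_id': 1, 'card_number': '4111111111111111'}]
```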

Notable differences between headless and data lake architectures

There are three critical differences between the headless data architecture and a data lake architecture.

In a headless data architecture, any service can use the data, whether it’s analytical, operational, or somewhere in between. Headless architecture is about making data access easy and pluggable wherever you need it, and it isn’t limited to analytical tool sets.

You can use tables, streams, or both—it’s entirely up to you, based on your business use cases and needs.

A headless data architecture does not require you to copy all of your data to one central location. It’s common to compose your data layer from different data sources, resulting in a modular data layer.

Further, a headless data architecture enables you to build data lakes and warehouses. You simply plug your Iceberg tables into the data lake or data warehouse by registering them as external tables. You are of course free to set up a pipeline to copy headless data into your lakes or warehouses, but headless gives you the same advantages with no copying required.

Modularity, reusability, structure, and easy access to both streams and tables remain key features of the headless data architecture. Whatever you choose to do with that data once it’s in your data lake boundary is entirely up to you.

How to build a headless data architecture

Making a headless data architecture a reality requires investing in the headless data layer. Many businesses today are building their own headless data architectures, even if they don’t call it that yet, though using cloud services tends to be the easiest and most popular way to get started. If you’re building your own, it’s important to first create well-organized, schematized data streams before materializing them into Apache Iceberg tables.

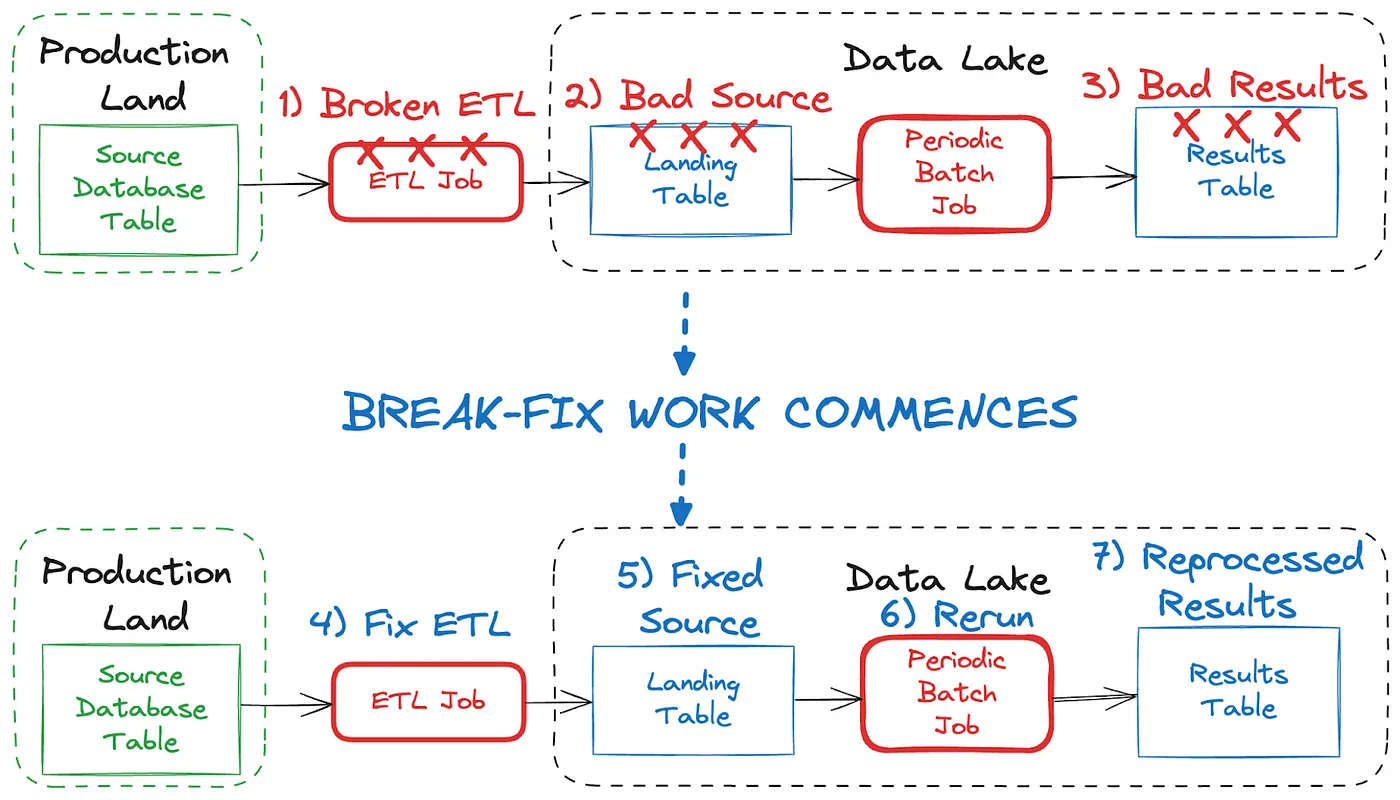

Connectors (such as Kafka Connect) are commonly used to convert your data streams into Iceberg tables. Alternatively, you can rely on managed services that automatically materialize your topics into append-only Iceberg tables, with no break-fix work and no pipelines to maintain, so the same data is available as either a stream or a table.
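Conceptually, materializing a topic into an append-only table is a loop: read records past the last materialized offset, write them out as a new immutable file, and record how far you got in the same commit so a restart neither skips nor duplicates records. The sketch below is a toy model of that loop under those assumptions, not Kafka Connect or any Iceberg sink’s actual implementation; `AppendOnlyTable`, `materialize`, and the batch size are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AppendOnlyTable:
    """Toy table: each commit appends one immutable data file (here, a list of rows)."""
    files: list = field(default_factory=list)
    committed_offset: int = 0  # next topic offset to materialize, stored with the table

    def commit(self, rows, next_offset):
        # Data and progress are recorded in the same commit, so a restart
        # resumes from committed_offset instead of re-writing the same records.
        self.files.append(list(rows))
        self.committed_offset = next_offset


def materialize(topic_log, table, batch_size=3):
    """Copy unread records from the topic into the table in batches; return files written."""
    files_written = 0
    offset = table.committed_offset
    while offset < len(topic_log):
        batch = topic_log[offset:offset + batch_size]
        table.commit(batch, offset + len(batch))
        offset += len(batch)
        files_written += 1
    return files_written


topic_log = [{"order_id": i} for i in range(7)]
table = AppendOnlyTable()
print(materialize(topic_log, table))       # 3 (batches of 3, 3, and 1 records)
topic_log.append({"order_id": 7})
print(materialize(topic_log, table))       # 1 (only the new record is picked up)
print(sum(len(f) for f in table.files))    # 8
```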

Finally, you can plug your data streams and tables into whatever data lake, data warehouse, processor, query engine, reporting software, database, or application framework you need it in.

You’ll also need to provide support for the processing heads of your choice. Some processors can plug directly into the Iceberg catalog, providing immediate access to the data. Proprietary processing engines, like those from major cloud providers, usually require a copy of the Iceberg metadata to enable processing. Check each engine’s documentation to make sure your integration is correct.
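The correctness concern with copied metadata is staleness: an engine that queries its own copy won’t see new commits until that copy is refreshed. The toy model below illustrates the difference between a head that reads the catalog directly and one that works from a copy; the classes and the `v1.metadata.json`-style file names are hypothetical, and how (and how often) a real engine refreshes its copy depends on that engine.

```python
class Catalog:
    """Toy catalog holding a pointer to the table's current metadata file."""

    def __init__(self, metadata_file):
        self.current_metadata = metadata_file

    def commit(self, new_metadata_file):
        self.current_metadata = new_metadata_file


class CatalogHead:
    """An engine that asks the catalog for the current metadata on every query."""

    def __init__(self, catalog):
        self.catalog = catalog

    def metadata_for_query(self):
        return self.catalog.current_metadata


class CopiedMetadataHead:
    """An engine that queries its own copy of the metadata pointer until re-synced."""

    def __init__(self, catalog):
        self.catalog = catalog
        self.metadata_copy = catalog.current_metadata

    def sync(self):
        self.metadata_copy = self.catalog.current_metadata

    def metadata_for_query(self):
        return self.metadata_copy


catalog = Catalog("v1.metadata.json")
direct, copied = CatalogHead(catalog), CopiedMetadataHead(catalog)
catalog.commit("v2.metadata.json")       # a writer adds new data
print(direct.metadata_for_query())       # v2.metadata.json
print(copied.metadata_for_query())       # v1.metadata.json (stale until synced)
copied.sync()
print(copied.metadata_for_query())       # v2.metadata.json
```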

While it may seem a bit daunting, in reality you’re likely to use only one or two heads at the start. In the second blog in this series, we’ll take a more detailed look at how to implement a headless data architecture, including shifting data formalization to the “left” to make it more accessible and reliable for everyone who needs it.
