
Use Cases

Introducing Confluent Private Cloud: Cloud-Level Agility for Your Private Infrastructure

Confluent Private Cloud (CPC) is a new software package that extends Confluent’s cloud-native innovations to your private infrastructure. CPC offers an enhanced broker with up to 10x higher throughput and a new Gateway that provides network isolation and central policy enforcement without client...

Queues for Apache Kafka® Is Here: Your Guide to Getting Started in Confluent

Confluent announces the General Availability of Queues for Kafka on Confluent Cloud and Confluent Platform with Apache Kafka 4.2. This production-ready feature brings native queue semantics to Kafka through KIP-932, enabling organizations to consolidate streaming and queuing infrastructure while...

New in Confluent Intelligence: A2A, Multivariate Anomaly Detection, Vector Search for Cosmos DB, Amazon S3 Vectors, and More

Explore new Confluent Intelligence features: A2A integration, multivariate anomaly detection, vector search for Cosmos DB and S3 Vectors, Private Link, and MCP support.

How to Implement Your First ML Function in Streaming

Learn how to add your first ML model to a real-time streaming pipeline using a simple, low-risk pattern for inference, scoring, and deployment with Apache Kafka®.

Sustainable Streaming Architectures: A GreenOps Guide to Efficient, Low-Carbon Data Systems

Design energy-efficient, low-cost streaming systems. Learn GreenOps patterns to reduce compute waste, optimize storage, and lower the carbon footprint of real-time data.

How to Build Autonomous Data Systems for Real-Time Decisioning

Learn how real-time decisioning and autonomous data systems enable organizations to act on data instantly using streaming, automation, and AI.

How to Break Off Your First Microservice

Learn how to safely break off your first microservice from a monolith using a low-risk, incremental migration approach.

How to Future-Proof Architectures With Continuous Availability Via Hybrid & Multicloud

Learn how to design future-proof architectures for hybrid and multicloud environments that balance portability, resilience, and long-term flexibility, and how to use Kafka to implement continuous availability.

How to Monetize Enterprise Data: The Definitive Guide

Learn how to design and implement a real-time data monetization engine using streaming systems. Get architecture patterns, code examples, tradeoffs, and best practices for billing usage-based data products.

How to Build Real-Time Compliance & Audit Logging With Apache Kafka®

Learn how to build a real-time compliance and audit logging pipeline using Apache Kafka® or the Confluent data streaming platform, with architecture details and best practices covering schemas, immutability, retention, and more.

The BI Lag Problem and How Event-Driven Workflows Solve It

Learn how to automate BI with real-time streaming. Explore event-driven workflows that deliver instant insights and close the gap between data and action.

How Does Real-Time Streaming Prevent Fraud in Banking and Payments?

Discover how banks and payment providers use Apache Kafka® streaming to detect and block fraud in real time. Learn patterns for anomaly detection, risk mitigation, and trusted automation.

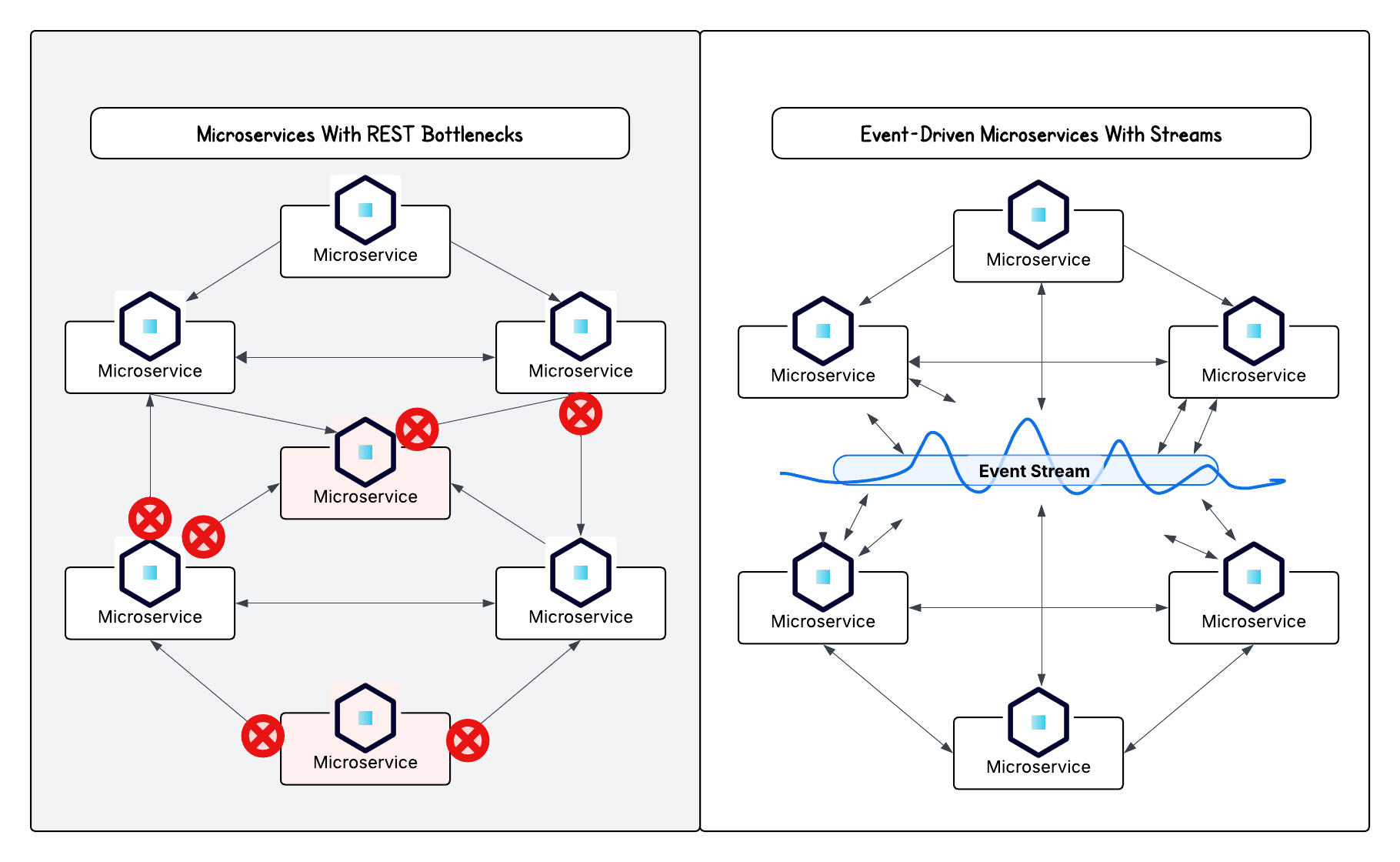

Do Microservices Need Event-Driven Architectures?

Discover why microservices architectures thrive with event-driven design and how streaming powers applications that are agile, resilient, and responsive in real time.

Making Data Quality Scalable With Real-Time Streaming Architectures

Learn how to validate and monitor data quality in real time with Apache Kafka® and Confluent. Prevent bad data from entering pipelines, improve trust in analytics, and power reliable business decisions.

How to Build Real-Time Alerts to Stay Ahead of Critical Events

Learn how to design real-time alerts with Apache Kafka® using alerting patterns, anomaly detection, and automated workflows for resilient responses to critical events.

How to Build Real-Time Apache Kafka® Dashboards That Drive Action

Learn how to build real-time dashboards with Apache Kafka® that help your organization go beyond simple data visualization and analysis paralysis to instant analysis and action.

3 Strategies for Achieving Data Efficiency in Modern Organizations

Managing exponentially growing data efficiently takes a multipronged approach built around left-shifted (early-in-the-pipeline) governance and stream processing.